and here we see the native-to-LA fire truck defending its territory by threatening the 350Z invader species

worldwide toilet paper shortage due to Firefox DNS over HTTP rollout, in this paper if you hear me out i will

nothing says you care deeply for the recently bereaved like looking to hire a CASUAL FUNERAL SERVICE *OPERATIVE*

i decided to make my G Suite “mdmtool” a bit more robust and release-worthy, instead of just proof of concept code.

https://github.com/rickt/mdmtool

enjoy!

Our desktop support & G Suite admin folks needed a simple, fast command-line tool to query basic info about our company’s mobile devices (which are all managed using G Suite’s built-in MDM).

So I wrote one.

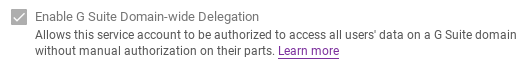

Since this tool needs to be run via command-line, it can’t use any interactive or browser-based authentication, so we need to use a service account for authentication.

Pre-requisites (GCP & G Suite):

You’ll need a service account and its client_id. Make a note of it!

Authorize the client_id of your service account for the necessary API scopes. In the Admin Console for your G Suite domain (Admin Console –> Security –> Advanced Settings –> Authentication –> Manage API Client Access), add your client_id in the “Client Name” box, and add https://www.googleapis.com/auth/admin.directory.device.mobile.readonly in the “One or more API scopes” box.

Pre-requisites (Go)

You’ll need to “go get” a few packages:

go get -u golang.org/x/oauth2/google

go get -u google.golang.org/api/admin/directory/v1

go get -u github.com/dustin/go-humanize

Pre-requisites (Environment Variables)

Because it’s never good to store runtime configuration within code, you’ll notice that the code references several environment variables. Set them up to suit your preference, but something like this will do:

export GSUITE_COMPANYID="A01234567"
export SVC_ACCOUNT_CREDS_JSON="/home/rickt/dev/adminsdk/golang/creds.json"
export GSUITE_ADMINUSER="[email protected]"

And finally, the code

Gist URL: https://gist.github.com/rickt/199ca2be87522496e83de77bd5cd7db2

found an old photo from about TEN THOUSAND YEARS AGO when we were driving my mum’s 1966 MGB across the North Yorkshire moors on a snowy Boxing Day.

I wonder what “Howard” is up to these days? (the old MGB’s license plate was HWD777D so my mum called him “Howard”!)

just look at this FABULOUS cockpit on this STUNNING 73/74 Porsche Carrera RS

i wanted to try out the automatic loading of CSV data into BigQuery, specifically using a Cloud Function that would automatically run whenever a new CSV file was uploaded into a Google Cloud Storage bucket.

it worked like a champ. here’s what i did to PoC:

$ head -3 testdata.csv
id,first_name,last_name,email,gender,ip_address
1,Andres,Berthot,[email protected],Male,104.204.241.0
2,Iris,Zwicker,[email protected],Female,61.87.224.4

$ gsutil mb gs://csvtestbucket

$ pip3 install google-cloud-bigquery --upgrade

$ bq mk --dataset rickts-dev-project:csvtestdataset

$ bq mk -t csvtestdataset.csvtable \
  id:INTEGER,first_name:STRING,last_name:STRING,email:STRING,gender:STRING,ip_address:STRING
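since the load will only behave if the CSV columns line up with that schema, here's a quick sanity check you could run first. this is just a sketch, not part of the original PoC: `parse_schema` and `header_matches` are made-up helper names, and the email in the sample row is a placeholder.

```python
import csv
import io


def parse_schema(spec):
    """Turn 'id:INTEGER,first_name:STRING' into [('id', 'INTEGER'), ...]."""
    return [tuple(field.split(":", 1)) for field in spec.split(",")]


def header_matches(csv_text, spec):
    """True if the CSV header row lists the schema's column names, in order."""
    header = next(csv.reader(io.StringIO(csv_text)))
    return header == [name for name, _type in parse_schema(spec)]


# the same schema string that was passed to `bq mk -t`
SCHEMA = (
    "id:INTEGER,first_name:STRING,last_name:STRING,"
    "email:STRING,gender:STRING,ip_address:STRING"
)

# header plus one row; the email address is a placeholder, not real data
SAMPLE = (
    "id,first_name,last_name,email,gender,ip_address\n"
    "1,Andres,Berthot,someone@example.com,Male,104.204.241.0\n"
)

if __name__ == "__main__":
    print(header_matches(SAMPLE, SCHEMA))
```

it only checks column names and order, not types, so a bad value in the id column would still only show up when the load job runs.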

BUCKET: csvtestbucket
DATASET: csvtestdataset
TABLE: csvtable
VERSION: v14

google-cloud
google-cloud-bigquery

*csv
*yaml

$ ls
env.yaml main.py requirements.txt testdata.csv
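main.py itself isn't reproduced here, so here's a rough sketch of what a `csv_loader` background function could look like. it is not the original code. it assumes the DATASET, TABLE and VERSION values arrive as environment variables and that the trigger event carries the usual `bucket` and `name` fields; `load_config` and `gcs_uri` are made-up helper names.

```python
import os


def load_config(env=os.environ):
    """Read the settings that are assumed to arrive as environment variables."""
    return {
        "table_id": "{}.{}".format(env["DATASET"], env["TABLE"]),
        "version": env.get("VERSION", "unknown"),
    }


def gcs_uri(event):
    """Build the gs:// URI of the object that triggered the function."""
    return "gs://{}/{}".format(event["bucket"], event["name"])


def csv_loader(data, context):
    """Background function: load the newly finalized CSV into BigQuery."""
    # imported lazily so the helpers above work without the client library
    from google.cloud import bigquery

    cfg = load_config()
    client = bigquery.Client()

    job_config = bigquery.LoadJobConfig()
    job_config.source_format = bigquery.SourceFormat.CSV
    job_config.skip_leading_rows = 1  # the CSV has a header row

    # "dataset.table" is resolved against the client's default project
    job = client.load_table_from_uri(
        gcs_uri(data), cfg["table_id"], job_config=job_config
    )
    print("Starting job {}".format(job.job_id))
    print("Function=csv_loader, Version={}".format(cfg["version"]))
    print("File: {}".format(data["name"]))

    job.result()  # block until the load job completes (raises on failure)
    print("Job finished.")
    print("Loaded {} rows.".format(job.output_rows))
```

the load appends to the existing table by default, so uploading the same file twice would double the rows. a real version would probably want to guard against that.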

$ gcloud beta functions deploy csv_loader \
  --runtime=python37 \
  --trigger-resource=gs://csvtestbucket \
  --trigger-event=google.storage.object.finalize \
  --entry-point=csv_loader \
  --env-vars-file=env.yaml

$ gsutil cp testdata.csv gs://csvtestbucket/
Copying file://testdata.csv [Content-Type=text/csv]...
- [1 files][ 60.4 KiB/ 60.4 KiB]
Operation completed over 1 objects/60.4 KiB.

$ gcloud functions logs read
[ ... snipped for brevity ... ]
D csv_loader 274732139359754 2018-10-22 20:48:27.852 Function execution started
I csv_loader 274732139359754 2018-10-22 20:48:28.492 Starting job 9ca2f39c-539f-454d-aa8e-3299bc9f7287
I csv_loader 274732139359754 2018-10-22 20:48:28.492 Function=csv_loader, Version=v14
I csv_loader 274732139359754 2018-10-22 20:48:28.492 File: testdata2.csv
I csv_loader 274732139359754 2018-10-22 20:48:31.022 Job finished.
I csv_loader 274732139359754 2018-10-22 20:48:31.136 Loaded 1000 rows.
D csv_loader 274732139359754 2018-10-22 20:48:31.139 Function execution took 3288 ms, finished with status: 'ok'

looks like the function ran as expected!

$ bq show csvtestdataset.csvtable
Table rickts-dev-project:csvtestdataset.csvtable

   Last modified            Schema            Total Rows   Total Bytes   Expiration   Time Partitioning   Labels
 ----------------- ----------------------- ------------ ------------- ------------ ------------------- --------
  22 Oct 13:48:29   |- id: integer          1000         70950
                    |- first_name: string
                    |- last_name: string
                    |- email: string
                    |- gender: string
                    |- ip_address: string

great! there are now 1000 rows. looking good.

$ egrep '^[1,2,3],' testdata.csv
1,Andres,Berthot,[email protected],Male,104.204.241.0
2,Iris,Zwicker,[email protected],Female,61.87.224.4
3,Aime,Gladdis,[email protected],Female,29.55.250.191

now compare those first 3 rows of the CSV with the first 3 rows of the bigquery table

$ bq query 'select * from csvtestdataset.csvtable \
  where id IN (1,2,3)'
Waiting on bqjob_r6a3239576845ac4d_000001669d987208_1 ... (0s) Current status: DONE
+----+------------+-----------+---------------------------+--------+---------------+
| id | first_name | last_name | email                     | gender | ip_address    |
+----+------------+-----------+---------------------------+--------+---------------+
|  1 | Andres     | Berthot   | [email protected]         | Male   | 104.204.241.0 |
|  2 | Iris       | Zwicker   | [email protected]         | Female | 61.87.224.4   |
|  3 | Aime       | Gladdis   | [email protected]         | Female | 29.55.250.191 |
+----+------------+-----------+---------------------------+--------+---------------+

and whaddyaknow, they match! w00t!
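eyeballing three rows is fine for a PoC. if you wanted to check more of them, something like this could do the comparison. it's a sketch under assumptions: `mismatched_ids` is a made-up helper, the table rows are assumed to be plain dicts (however you fetched them), and the sample leaves out the email column.

```python
import csv
import io


def csv_rows_by_id(csv_text):
    """Index the CSV's data rows by their integer id."""
    return {int(r["id"]): dict(r) for r in csv.DictReader(io.StringIO(csv_text))}


def mismatched_ids(csv_text, table_rows):
    """Return the ids of table rows that differ from (or are missing in) the CSV."""
    expected = csv_rows_by_id(csv_text)
    bad = []
    for row in table_rows:
        # the CSV side is all strings, so compare on string values
        got = {key: str(value) for key, value in row.items()}
        if expected.get(int(row["id"])) != got:
            bad.append(int(row["id"]))
    return bad


# sample data taken from the rows above, minus the email column
CSV_TEXT = (
    "id,first_name,last_name,gender,ip_address\n"
    "1,Andres,Berthot,Male,104.204.241.0\n"
    "2,Iris,Zwicker,Female,61.87.224.4\n"
    "3,Aime,Gladdis,Female,29.55.250.191\n"
)

TABLE_ROWS = [
    {"id": 1, "first_name": "Andres", "last_name": "Berthot",
     "gender": "Male", "ip_address": "104.204.241.0"},
    {"id": 2, "first_name": "Iris", "last_name": "Zwicker",
     "gender": "Female", "ip_address": "61.87.224.4"},
    {"id": 3, "first_name": "Aime", "last_name": "Gladdis",
     "gender": "Female", "ip_address": "29.55.250.191"},
]

if __name__ == "__main__":
    print(mismatched_ids(CSV_TEXT, TABLE_ROWS))
```

an empty list means every table row that was checked has an identical row in the CSV.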

proof of concept: complete!

conclusion: cloud functions are pretty great.

that this plate is on a gorgeous 1930’s Packard Twelve instead of some awful 30ft+ long stretched-and-bowing-in-the-middle prom night “limo”

fulfilled a life-long dream of going to the US Marines airshow at MCAS Miramar in San Diego.

it was fantastic.